Initial considerations

As you probably already have guessed by the title of this article, I'm performing the tests against a SQL Server, MySQL and PostgreSQL database instance. To keep things as fair as possible, I'm performing each test in a Linux virtual machine [...]

The tests will be done against a set of 10,000 records in a table that only has an id column and a wide string (1,000,000 characters to be precise). [...] that has the exact same specs for the data type of the wide column, I tried to choose something as homologous as possible between them.

[...] RDBMS setup is that I tested them right out of the box, without any best practices, tuning, or specific parameter settings to possibly help improve performance.

Information related to the RDBMSs and their respective runs

I used the latest available version of each product to keep things as fair as possible.

The scenario for the test goes like this:

1 - A simple test database is created in each DB instance and a table containing the wide column, with a structure similar to this:

id INT NOT NULL PRIMARY KEY AUTOINCREMENT,

The syntax for the auto increment id varies within each RDBMS, but it is just [...] The data type of the wide column is not exactly the same across all RDBMSs, but I tried to choose one for each that allows me to store the exact same data in each of them, to keep things as similar as possible.

2 - A round of 10,000 records is inserted and the following rounds of deletes [...] time to delete 1,000 rows, as well as the size of the respective transaction.
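To make the shape of the test concrete, here is a minimal sketch in Python. It is not the harness used for the article: it assumes SQLite (via the standard `sqlite3` module) as a stand-in engine so that it runs without a server, the table name `test`, the column name `wide` and the function `run_delete_rounds` are hypothetical, and the sizes are scaled down from the 10,000 records and 1,000,000 characters described above. It only times each round of deletes and does not measure transaction log size.

```python
import sqlite3
import time


def run_delete_rounds(rows=200, width=1_000, batch=50):
    """Insert `rows` wide records, then delete them `batch` rows at a time.

    Returns (timings, remaining), where timings is a list of
    (rows_deleted, seconds) tuples, one per round.
    The article's own figures would be rows=10_000, width=1_000_000
    and 1,000 rows per delete round; the defaults here are reduced.
    """
    # Assumption: SQLite in memory stands in for a real RDBMS instance.
    conn = sqlite3.connect(":memory:")

    # SQLite's spelling of an auto-increment id; each RDBMS differs here.
    conn.execute(
        "CREATE TABLE test ("
        "id INTEGER PRIMARY KEY AUTOINCREMENT, "
        "wide TEXT NOT NULL)"
    )

    payload = "x" * width
    conn.executemany(
        "INSERT INTO test (wide) VALUES (?)",
        ((payload,) for _ in range(rows)),
    )
    conn.commit()

    timings = []
    while True:
        start = time.perf_counter()
        # Delete the `batch` rows with the lowest ids in one statement.
        cur = conn.execute(
            "DELETE FROM test WHERE id IN "
            "(SELECT id FROM test ORDER BY id LIMIT ?)",
            (batch,),
        )
        conn.commit()
        elapsed = time.perf_counter() - start
        if cur.rowcount == 0:
            break  # nothing left to delete
        timings.append((cur.rowcount, elapsed))

    remaining = conn.execute("SELECT COUNT(*) FROM test").fetchone()[0]
    conn.close()
    return timings, remaining


if __name__ == "__main__":
    rounds, left = run_delete_rounds()
    for number, (deleted, seconds) in enumerate(rounds, start=1):
        print(f"round {number}: deleted {deleted} rows in {seconds:.6f}s")
    print(f"rows remaining: {left}")
```

Pointing the same loop at SQL Server, MySQL or PostgreSQL would mean swapping in that engine's driver, its own auto-increment syntax and its own wide string type, which is exactly where the per-RDBMS differences mentioned above come in.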

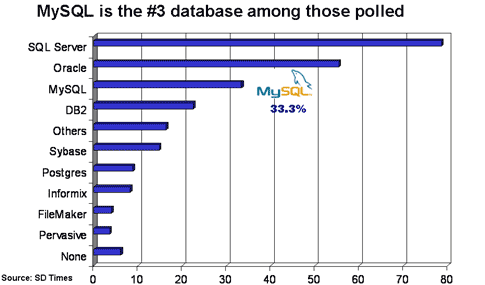

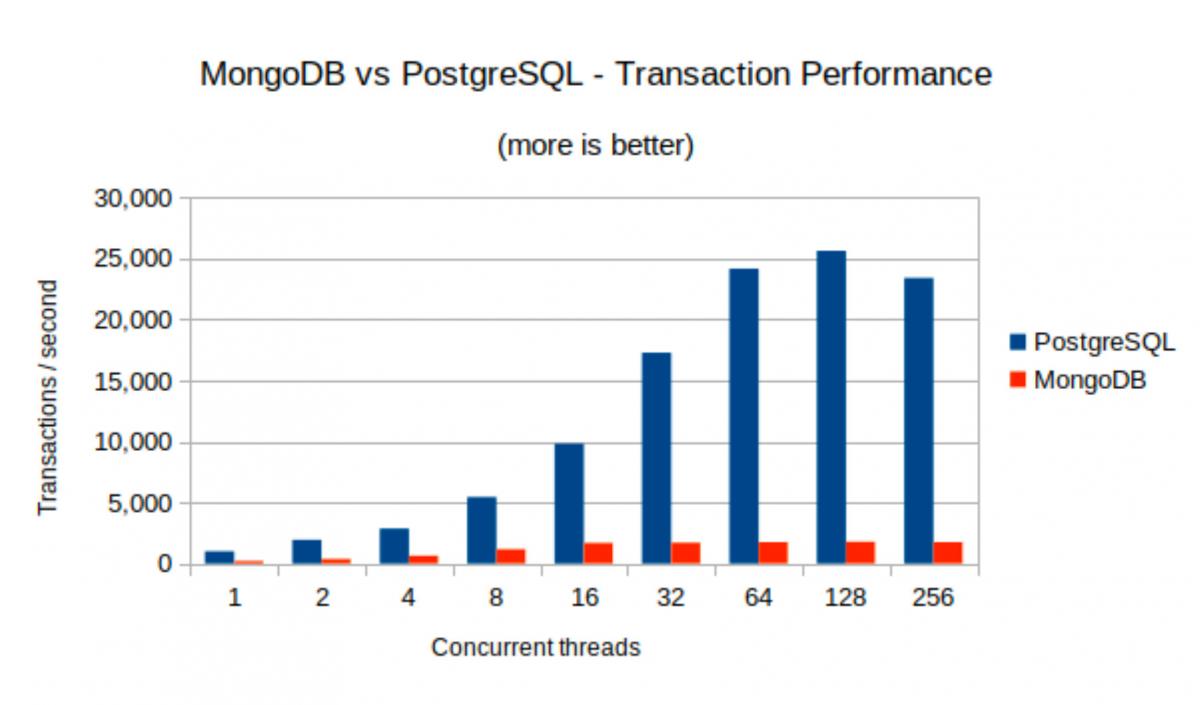

In this tip I will be presenting the results I compiled after performing records deletions within each respective RDBMS.

A note to future readers: The text below was last edited in August 2008. That's nearly 11 years ago as of this edit. Software can change rapidly from version to version, so before you go choosing a DBMS based on the advice below, do some research to see if it's still accurate.

MySQL is much more commonly provided by web hosts.

PostgreSQL is a much more mature product.

There's this discussion addressing your "better" question.

Apparently, according to this web page, MySQL is fast when concurrent access levels are low, and when there are many more reads than writes. On the other hand, it exhibits low scalability with increasing loads and write/read ratios. PostgreSQL is relatively slow at low concurrency levels, but scales well with increasing load levels, while providing enough isolation between concurrent accesses to avoid slowdowns at high write/read ratios. It goes on to link to a number of performance comparisons, because these things are very [...]

So if your decision factor is "which is faster?", then the answer is "it depends. If it really matters, test your application against both." And if you really, really care, you get in two DBAs (one who specializes in each database) and get them to tune the crap out of the databases, and then choose. It's astonishing how expensive good DBAs are, and they are worth every cent.

But it probably doesn't matter that much, so just pick whichever database you like the sound of and go with it; better performance can be bought with more RAM and CPU, and more appropriate database design, and clever stored procedure tricks and so on - and all of that is cheaper and easier for random-website-X than agonizing over which to pick, MySQL or PostgreSQL, and specialist tuning from expensive DBAs.

Joel also said in that podcast that comment would come back to bite him because people would be saying that MySQL was a piece of crap - Joel couldn't get a count of rows back.

MySQL is the only database I've ever programmed against in my career that has had data integrity problems, where you do queries and you get nonsense answers back, that are incorrect. And that's one of the things that frustrates me, actually, about blogging or just the Internet in general.
